Empathy

In a recent piece of news, a dolphin distressed by a fish-hook sought out human divers for assistance. This, while heartwarming, is astounding on several distinct levels:

How did the dolphin know to ask people for help? This dolphin likely has very little personal history in receiving help from humans, and while we’re entirely capable of giving aid, how can it know that? This goes way beyond conditioning into actual intelligent action. The dolphin was able to actively request help from strangers.

How did the dolphin predict that they would even consider helping? The dolphin, somehow knowing that humans might be able to help it, had to have some sense of whether or not they would. This suggests, in my understanding, that the dolphin had a sense of not only its own pain, but the potential empathy that the divers might have towards it.

In short, what is remarkable about this case is that we have a reasonable example of a dolphin showing enough empathic recognition to identify the divers as significantly similar to itself, but how could it possibly do that?

Background

This is a considerably harder question than one might first think. Dr. Robin Murphy, a researcher here at Texas A&M University, has done extensive work in the field of affective (emotional) robotics. One of her past research questions has dealt with human acceptance of robots, particularly in the case of emergency rescue.

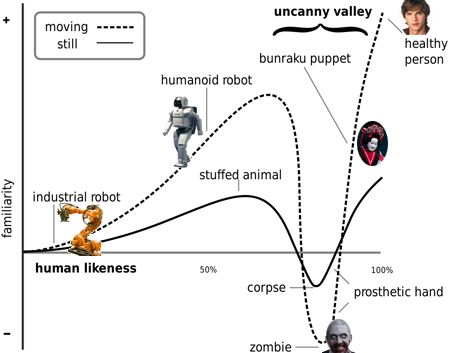

While affective robotics may seem initially silly, it possesses a distinct value. As people interact more and more with robots, we have to learn to trust them. Some of you may already be familiar with the uncanny valley, as it’s become a very popular discussion topic. For those unfamiliar with the idea, it’s the reason why robots such as Wall-E can be endearing while more “human” figures provoke a sense of revulsion. The real irony is that things can become more endearing by being less human and only possessing a few human-like qualities.

Why does it matter?

Where this becomes important is in the case of humans having to interact with robots. In the case of Dr. Murphy’s research, a search-and-rescue robot may be the only available form of help for a person trapped under the rubble of a collapsed building. If the victim is scared or put ill at ease by their rescuer, it can lead to non-cooperation and potential harm.

Likewise, as our population ages, we need more and more hands to help with the elderly. Many have suggested that robots may be ideal as twenty-four-hour caretakers. This might give people who are desperately trying to maintain their independence and autonomy a way to handle things themselves without having to depend on another person. This has even served as the focus for the film Robot and Frank (which I haven’t seen, but looks hilarious). Part of the problem, however, is whether or not we’re capable of designing an assistant that fulfills both emotional and physical needs. Remember a basic rule for (affective/effective) design: if someone hates the product, they won’t use it.

This also becomes important with the rapid growth in the field of prosthetics. As we gain more and more ability to replace the capabilities that people have lost, we may be making them distinctly different and strange. How can we build technology that becomes both a comfortable extension of their own body and socially acceptable to those they’ll interact with day-to-day?

How does this apply?

What I find remarkable, in particular, about the dolphin case is that the dolphin, in a distressed state, actively recognized a being vastly different in physical appearance as similar to itself.

What properties did the dolphin use to identify a potential helper? What similarities did the dolphin fixate on that let it ignore the differences?

If we could answer these questions, we’d be a bit further along in our pursuit of understanding what makes us (appear) human.
